BlackCodedMIDI

Experiments fusing Black MIDI with the practice of live coding, plus tools for the generation, transformation, playback and visualization of dense MIDI files.

🧪 MIDI LAB

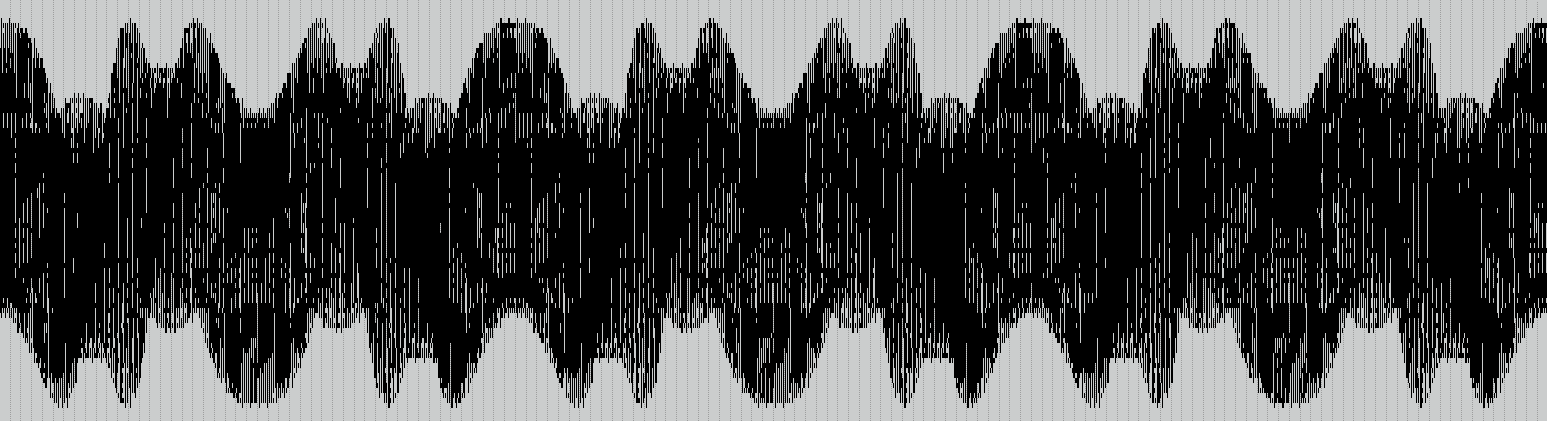

We found that by accelerating the tempo to a speed impossible for any physical device, the musical notation can create the illusion of an animated film, and that stuttering notes can generate a grey-scale palette in an otherwise black-and-white language:
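One way to picture the grey-scale effect: split each animation frame into a few sub-ticks and retrigger a note on only some of them, so that the share of "on" sub-ticks stands in for a grey level, much like dithering. The sketch below assumes that mapping; `stutter_pattern` and its `steps` parameter are hypothetical names, not part of this repository.

```python
def stutter_pattern(grey: float, steps: int = 8) -> list[bool]:
    """Hypothetical mapping: return which of `steps` sub-ticks of one frame
    a note sounds in, so the fraction of True values approximates `grey`
    (0.0 = white / silent, 1.0 = black / sounding in every sub-tick)."""
    if not 0.0 <= grey <= 1.0:
        raise ValueError("grey must be in [0, 1]")
    on_count = round(grey * steps)
    # Spread the "on" sub-ticks evenly across the frame (Bresenham-style
    # accumulator) instead of bunching them at the start.
    pattern = []
    acc = 0
    for _ in range(steps):
        acc += on_count
        if acc >= steps:
            acc -= steps
            pattern.append(True)
        else:
            pattern.append(False)
    return pattern
```

For example, `stutter_pattern(0.5)` alternates off/on across the eight sub-ticks, giving four retriggers per frame. Spreading the hits evenly keeps mid-greys looking like a texture rather than a short flash at the start of each frame.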

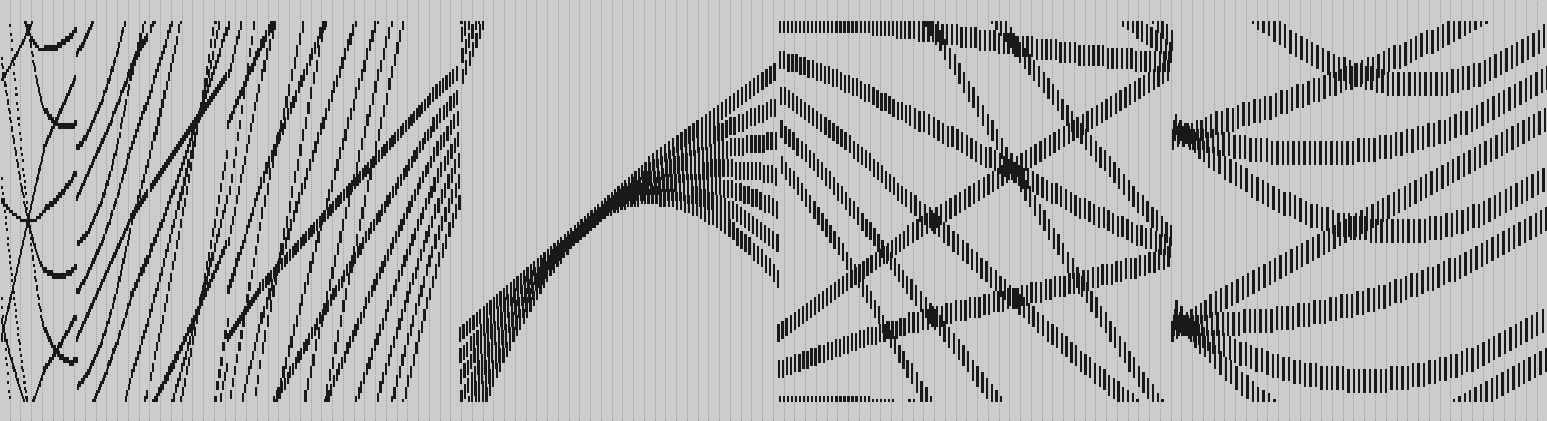

In the inverse process, old movie footage can now be used as a source for musical performance.
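A minimal sketch of this inverse direction, assuming a frame has already been decoded into rows of grey values between 0.0 (white) and 1.0 (black). Columns become pitches starting at MIDI note 21 (A0, the lowest key of an 88-key piano), rows become time with the bottom row sounding first, as in the falling-notes view, and each vertical run of dark pixels becomes one held note. `frame_to_notes`, `Note` and the default threshold are hypothetical choices for illustration.

```python
from dataclasses import dataclass


@dataclass
class Note:
    pitch: int   # MIDI note number (0-127)
    start: int   # start time in ticks
    length: int  # duration in ticks


def frame_to_notes(frame, lowest_pitch=21, threshold=0.5, ticks_per_row=1):
    """Hypothetical frame-to-notes mapping.
    frame: list of rows (top to bottom), each a list of grey values in [0, 1].
    Columns map to pitches (left = lowest_pitch); the bottom row plays first."""
    notes = []
    height = len(frame)
    width = len(frame[0]) if frame else 0
    for col in range(width):
        pitch = lowest_pitch + col
        if pitch > 127:  # MIDI has no notes above 127
            break
        run_start = None
        for t in range(height):
            row = frame[height - 1 - t]  # read rows bottom-up
            dark = row[col] >= threshold
            if dark and run_start is None:
                run_start = t  # a dark run begins: note on
            elif not dark and run_start is not None:
                notes.append(Note(pitch, run_start * ticks_per_row,
                                  (t - run_start) * ticks_per_row))
                run_start = None  # run ended: note off
        if run_start is not None:  # run reaches the top edge
            notes.append(Note(pitch, run_start * ticks_per_row,
                              (height - run_start) * ticks_per_row))
    notes.sort(key=lambda n: (n.start, n.pitch))
    return notes
```

Merging vertical runs into single long notes keeps the file smaller than emitting one short note per dark pixel; the per-pixel version would instead reproduce the stuttering texture of the original footage.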

We are also experimenting with simulating the human voice through massive clusters of piano notes. This would give us animation, music, AND voice acting, all generated from an extreme use of a single MIDI file!
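A common approach to "speaking piano" effects (assumed here, not necessarily the one used in this project) is to cut a recording into short windows and, for each window, measure how much energy sits at each piano key's frequency, then play all keys at once with velocities proportional to those amounts. The sketch below does that with a single-bin DFT per key and equal temperament (A4 = MIDI 69 = 440 Hz); `window_to_velocities` and `key_frequency` are hypothetical helpers.

```python
import math


def key_frequency(midi_note: int) -> float:
    """Equal-tempered frequency of a MIDI note, with A4 (note 69) = 440 Hz."""
    return 440.0 * 2 ** ((midi_note - 69) / 12)


def window_to_velocities(samples, sample_rate, keys=range(21, 109)):
    """Hypothetical analysis step: return {midi_note: velocity} for one short
    window of audio, from the energy at each piano key's frequency."""
    mags = {}
    for key in keys:
        f = key_frequency(key)
        if f >= sample_rate / 2:  # above Nyquist: cannot be measured
            continue
        # Single-bin DFT: correlate the window with a complex exponential at f.
        w = 2 * math.pi * f / sample_rate
        re = sum(s * math.cos(w * i) for i, s in enumerate(samples))
        im = -sum(s * math.sin(w * i) for i, s in enumerate(samples))
        mags[key] = math.hypot(re, im)
    peak = max(mags.values(), default=0.0)
    if peak == 0:
        return {}  # silent window: no notes
    # Scale to the MIDI velocity range; drop keys that round down to 0.
    velocities = {}
    for key, m in mags.items():
        v = round(127 * m / peak)
        if v > 0:
            velocities[key] = v
    return velocities
```

Calling it once per window (every few milliseconds of audio) yields one dense chord per window, which is where the massive clusters come from. A practical version would likely use an FFT library rather than this loop over keys and samples.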

Mainly, though, we are creating a concise language for generating new MIDI data: mathematical generators for note duration, instrument and velocity, translators from other formats and media, and sequencers for the misuse of our own MIDI players. We expect to expand all this into a collaborative playground, combining it with other live-coding languages, as we have already done with Flok.
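To make "mathematical generators" concrete, here is a minimal sketch, assuming each note parameter (onset, pitch, duration, velocity, instrument) is a function of the note index, serialized with only the standard library into a format-0 Standard MIDI File. `generate`, `to_smf` and the example formulas are hypothetical and are not this project's language.

```python
import struct
from typing import Callable

Gen = Callable[[int], int]  # hypothetical: a generator maps note index -> value

# Sort order for events sharing a tick: note-offs, then program changes, then note-ons.
OFF, PROGRAM, ON = 0, 1, 2


def vlq(value: int) -> bytes:
    """Encode a non-negative int as a MIDI variable-length quantity."""
    out = [value & 0x7F]
    value >>= 7
    while value:
        out.append((value & 0x7F) | 0x80)
        value >>= 7
    return bytes(reversed(out))


def generate(n: int, onset: Gen, pitch: Gen, duration: Gen,
             velocity: Gen, program: Gen, channel: int = 0):
    """Build (tick, order, message) events for n notes; every parameter
    comes from its own generator function of the note index."""
    events = []
    for i in range(n):
        t = max(0, onset(i))               # ticks cannot be negative
        p = pitch(i) % 128                 # wrap into the MIDI note range
        d = max(1, duration(i))            # at least one tick long
        v = max(1, min(127, velocity(i)))  # velocity 0 would mean note-off
        events.append((t, PROGRAM, bytes([0xC0 | channel, program(i) % 128])))
        events.append((t, ON, bytes([0x90 | channel, p, v])))
        events.append((t + d, OFF, bytes([0x80 | channel, p, 0])))
    return events


def to_smf(events, ppq: int = 480, tempo_us: int = 500_000) -> bytes:
    """Serialize events as a format-0 Standard MIDI File (one track)."""
    # Set Tempo meta event: microseconds per quarter note.
    track = bytearray(vlq(0) + b"\xff\x51\x03" + tempo_us.to_bytes(3, "big"))
    last = 0
    for tick, _, msg in sorted(events):
        track += vlq(tick - last) + msg  # delta time, then the message
        last = tick
    track += vlq(0) + b"\xff\x2f\x00"  # End of Track meta event
    header = b"MThd" + struct.pack(">IHHH", 6, 0, 1, ppq)
    return header + b"MTrk" + struct.pack(">I", len(track)) + bytes(track)


# Example: 256 overlapping notes whose parameters come from small formulas.
demo = to_smf(generate(
    256,
    onset=lambda i: i * 15,
    pitch=lambda i: 36 + (i * 7) % 48,
    duration=lambda i: 120 + (i % 4) * 60,
    velocity=lambda i: 40 + (i * 13) % 88,
    program=lambda i: 0,  # 0 = Acoustic Grand Piano in General MIDI
))
```

Saving the result is a single `open("demo.mid", "wb").write(demo)`. Note-offs are sorted before note-ons at the same tick so that a re-struck pitch is not cut off the moment it starts, which matters once notes overlap densely.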

For now, with only a tiny formula, the results can be quite surprising, both visually and musically:

In this preliminary stage we also noticed that some procedurally generated MIDI patterns, when viewed as a whole, look like beautiful sound waves. We may use these in future visual pieces.
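A wave-like pattern of this kind can be reproduced with a hypothetical generator whose pitch follows a sine over the note index; rendering it as a text piano roll (one row per pitch, one column per step) makes the contour visible. `sine_pitches` and `render_roll` are illustrative names, and the sine shape is an assumption about the kind of pattern meant.

```python
import math


def sine_pitches(n: int, center: int = 60, amplitude: int = 12,
                 period: int = 32) -> list[int]:
    """Hypothetical generator: the pitch of note i follows a sine around `center`."""
    return [round(center + amplitude * math.sin(2 * math.pi * i / period))
            for i in range(n)]


def render_roll(pitches: list[int], low: int, high: int) -> str:
    """Text piano roll: one row per pitch (highest on top), one column per step."""
    rows = []
    for p in range(high, low - 1, -1):
        rows.append("".join("#" if q == p else "." for q in pitches))
    return "\n".join(rows)


print(render_roll(sine_pitches(32, center=60, amplitude=4, period=16), 56, 64))
```

Stacking several such generators with different periods and amplitudes would give the denser, more waveform-like shapes that only appear when the whole file is viewed at once.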

🌐 HISTORICAL BACKGROUND

There have been several artistic movements and phenomena in the recent past that approached art from a hacking perspective, pushing the boundaries of production tools.

The demoscene in the 90s was characterized by crackers who probed the hardware and software limitations of computers and video game consoles to create optimized programs for musical and visual composition and, later, to work with error as an aesthetic resource.

With a socialized internet and established platforms for social interaction and content creation, a new wave of artists appeared in the 2000s, using online and free software tools to manipulate and hack digital material. Glitch art is one example, with digital image techniques such as data bending and datamoshing, alongside digital music genres such as clicks & cuts and glitch hop.

Among other new cultural manifestations, we are especially interested in Black MIDI. Emerging from social media, this artistic practice was born in 2009 when Shirasagi Yukki uploaded to Nico Nico Douga (a Japanese video-sharing service similar to YouTube) a video of a reinterpreted version of a popular video game song, composed with a very large number of MIDI notes (a piece intended to be played by devices, not by human piano/keyboard players).

Also known as blackened musical notation, after the black stains the note heads form on a score (when MIDI is translated to staff notation), this practice articulates both visual and sound aspects. The MIDI notes flow down the screen and activate the keys of a horizontal piano keyboard at the bottom, producing moving visual patterns. Through this activation, sound is perceived sometimes as rhythm, melody and harmony, and sometimes as a dense, homogeneous timbre, due to the clusters created by note duplication and addition. Both the visual and the sound aspects were thoroughly explored by the growing number of people who started producing and uploading their own Black MIDI videos and organizing online challenges.

As creative coding enthusiasts, we want to appropriate Black MIDI as an aesthetic resource with a strongly ludic and experimental approach, updating and expanding it by hybridizing it with other technologies through different programming languages and tools. We want to explore the relationship between image and sound, while also focusing on each one on its own.

💚 OUR INSPIRATIONS

- Conlon Nancarrow

- Norman McLaren

- Orangepaprika 67

- Ville-Matias Heikkilä